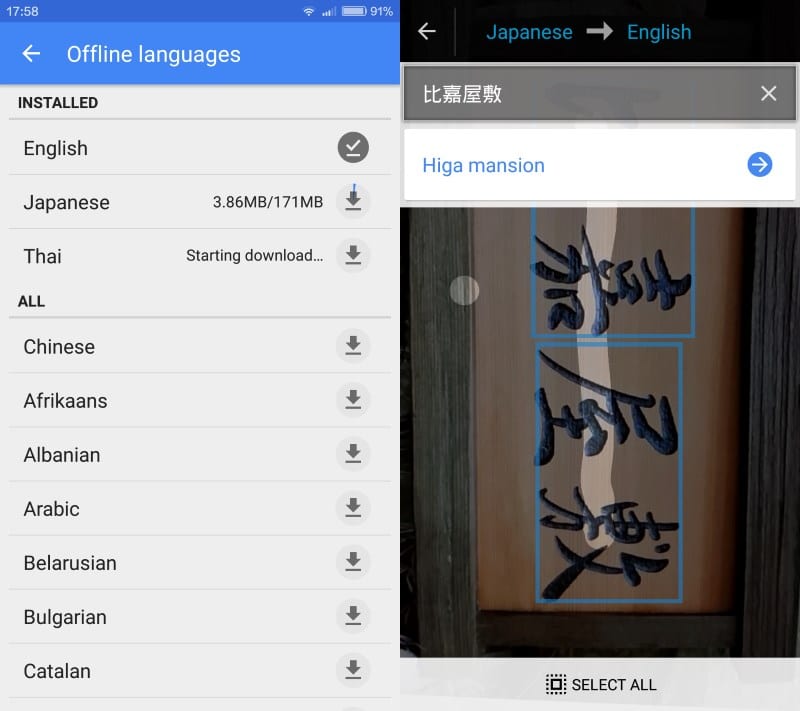

Back in September, Google previewed a new AR Translate feature for Lens that takes advantage of the technology behind the Pixel’s Magic Eraser. Ahead of that, Google Translate has replaced its built-in translation camera with Google Lens. Besides visual search, which has various shopping, object, and landmark identification use cases, Google Lens is good at lifting text for real-world copy and paste.

Historically, this sort of complex processing would happen in a remote data center, scaled out onto several servers. But Google built a very small neural network and a carefully curated training data set. That way, the computing can happen on a mobile phone with limited processing power and little if any connection to the Internet. Google Research has more about the work in a new blog post.

Google Translate can do this by relying on an increasingly trendy type of artificial intelligence called deep learning. Google has trained its artificial neural network, a key technology for deep learning, on images showing letters as well as on fake images marred by imperfections, to simulate real-life scenes. From there, the Google Translate app looks up the letters in order to make an inference, or educated guess, about the words that the mobile device’s camera was pointed at. The app can then work even when the mobile device it’s running on has no Internet connection.
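The lookup step described above, turning a possibly imperfect sequence of recognized letters into an educated guess about the intended word, can be illustrated with a small sketch. This is not Google's implementation, which the article does not detail. It assumes a tiny hypothetical vocabulary and uses fuzzy string matching from Python's standard library in place of whatever method the app actually uses. The names `VOCABULARY` and `guess_word` are made up for this example.

```python
from difflib import get_close_matches

# Hypothetical miniature vocabulary; a real language pack would be far larger.
VOCABULARY = ["exit", "entrance", "danger", "water", "station"]


def guess_word(recognized_letters, vocabulary=VOCABULARY, cutoff=0.6):
    """Return the vocabulary word closest to the recognized letters, or None.

    recognized_letters: letters in reading order, as a (hypothetical)
    character recognizer might emit them, possibly with mistakes.
    cutoff: minimum similarity (0 to 1) required to accept a match.
    """
    candidate = "".join(recognized_letters).lower()
    matches = get_close_matches(candidate, vocabulary, n=1, cutoff=cutoff)
    return matches[0] if matches else None


# One misread letter still resolves to the intended word.
print(guess_word(["d", "a", "n", "g", "o", "r"]))
# Letters that resemble nothing in the vocabulary yield no guess.
print(guess_word(["z", "z", "z", "z"]))
```

Because the vocabulary lives in memory, a lookup like this needs no network access, which fits the offline behavior the article describes.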

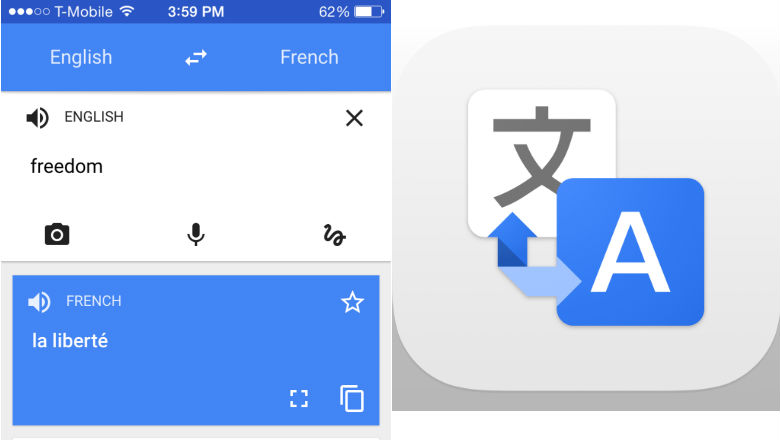

People using the app can try out the support for the new languages by downloading a language pack for each language. The news comes six months after Google first introduced instant visual translation in Translate, and a little over a year after Google’s acquisition of Quest Visual.
